April 18, 2025 at 5:49 AM

I saw an interesting thing pop up lately: why copy-pasting code into an LLM is better than using AI-assisted coding tools such as GitHub Copilot.

The reason boils down to token compression.

Token compression is a technique for limiting the number of tokens sent to the LLM. When a code base gets large, token compression makes a huge difference in speed and cost. If you’re using an all-you-can-code (flat-fee) AI-assisted coding tool, it is in the provider’s best interest to compress your code as much as possible.

But what do you leave out? When applying token compression to text, the choice is easy: leave out every “the”, “and”, and so on. With code, the choice is more difficult, because almost every token carries meaning.
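To make that concrete, here is a minimal sketch of naive stop-word removal. The `STOP_WORDS` list and the `compress_text` helper are made up for illustration; they are not how any particular coding tool compresses its context.

```python
# Hypothetical, tiny stop-word list for illustration only.
STOP_WORDS = {"the", "a", "an", "and", "of", "to", "is"}


def compress_text(text: str) -> str:
    """Drop stop words and keep every other word in its original order."""
    kept = [
        word
        for word in text.split()
        # Ignore case and trailing punctuation when checking the list.
        if word.lower().strip(".,;:!?") not in STOP_WORDS
    ]
    return " ".join(kept)


prose = "The parser reads the file and returns a list of tokens."
code = "if a and b: return the_value"

print(compress_text(prose))  # parser reads file returns list tokens.
print(compress_text(code))   # if b: return the_value
```

The prose is still understandable after compression. The code line has silently lost the variable `a` and the `and` operator, so it now means something different.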

And that’s why copy-pasting the whole code base into an LLM can produce better results: the LLM has all the relevant tokens available, not just a subset!
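As a rough sketch of what “pasting the whole code base” amounts to, the snippet below concatenates every matching file into one prompt. The `build_prompt` and `rough_token_count` helpers, the `---` separator format, and the four-characters-per-token estimate are all assumptions for illustration; real tokenizers and real tools will differ.

```python
from pathlib import Path


def build_prompt(root: str, suffixes: tuple[str, ...] = (".py",)) -> str:
    """Concatenate every matching file under root, each preceded by its path."""
    parts = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="replace")
            # Hypothetical layout: relative path, separator, contents, separator.
            parts.append(f"{path.relative_to(root)}\n---\n{text}\n---")
    return "\n".join(parts)


def rough_token_count(prompt: str) -> int:
    """Very rough size estimate, assuming about 4 characters per token."""
    return len(prompt) // 4


if __name__ == "__main__":
    prompt = build_prompt(".")
    print(f"about {rough_token_count(prompt)} tokens, nothing left out")
```

Nothing is dropped here, so the estimate also gives a feel for how quickly a growing code base eats into the context window and the bill.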

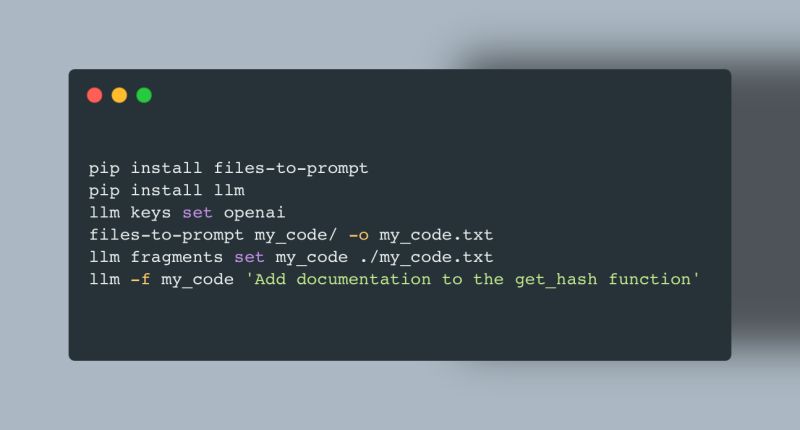

The quickest way to try it out is with the amazing files-to-prompt and llm libraries from Simon Willison. See the image for a quick start!

https://github.com/simonw/files-to-prompt https://github.com/simonw/llm