September 19, 2022 at 10:04 AM

A cautionary tale of A/B testing gone wrong.

If you’re reading this on LinkedIn, you probably know what A/B testing is. In its most common form, it means exposing your users to two variants of your site and measuring which one performs better across a set of metrics.

The winning version gets implemented.

However, what you measure makes all the difference.

Naïve teams make an assumption and measure only the metrics related to it, e.g. clicks or buy-rate.

Experienced teams monitor a much wider set of metrics to ensure harm is not done elsewhere in the system.

What do you mean by elsewhere?

Let’s say the new variant of the site increases the buy-rate (i.e., we sell more items).

However, it might also decrease the average margin because more low-margin items are bought, effectively harming the business.

It’s important to keep an eye on both.
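To make the buy-rate vs. margin trade-off concrete, here is a minimal sketch that evaluates a primary metric (buy-rate) next to a guardrail metric (average margin per purchase). The class and function names, the 5% tolerance, and the visitor and margin numbers are all hypothetical, made up purely for illustration; a real analysis would also need significance testing, which is left out here.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class VariantResult:
    """Hypothetical summary of one A/B variant."""
    name: str
    visitors: int
    purchase_margins: list[float]  # margin earned on each purchase

    @property
    def buy_rate(self) -> float:
        # Primary metric: purchases per visitor
        return len(self.purchase_margins) / self.visitors

    @property
    def avg_margin(self) -> float:
        # Guardrail metric: average margin per purchase
        return mean(self.purchase_margins)

    @property
    def margin_per_visitor(self) -> float:
        # What the business actually earns per visitor
        return sum(self.purchase_margins) / self.visitors


def evaluate(control: VariantResult, treatment: VariantResult,
             max_margin_drop: float = 0.05) -> dict:
    """Compare the primary metric with the guardrail.

    max_margin_drop is a hypothetical tolerance: the treatment fails the
    guardrail if its average margin falls more than this fraction below control.
    """
    margin_change = treatment.avg_margin / control.avg_margin - 1
    return {
        "buy_rate_lift": treatment.buy_rate / control.buy_rate - 1,
        "avg_margin_change": margin_change,
        "guardrail_ok": margin_change >= -max_margin_drop,
        "margin_per_visitor_change":
            treatment.margin_per_visitor / control.margin_per_visitor - 1,
    }


# Made-up numbers: B sells more items, but each sale earns less.
control = VariantResult("A", visitors=1000, purchase_margins=[10.0] * 50)
treatment = VariantResult("B", visitors=1000, purchase_margins=[7.5] * 60)
report = evaluate(control, treatment)
print(report)
```

With these invented numbers, buy-rate rises by about 20% while average margin drops by 25%, so `guardrail_ok` comes out `False`, and margin per visitor ends up roughly 10% lower. Looking only at buy-rate, B would have "won".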

But… even experienced teams often monitor only metrics closely tied to the business, not to the user experience.

What?

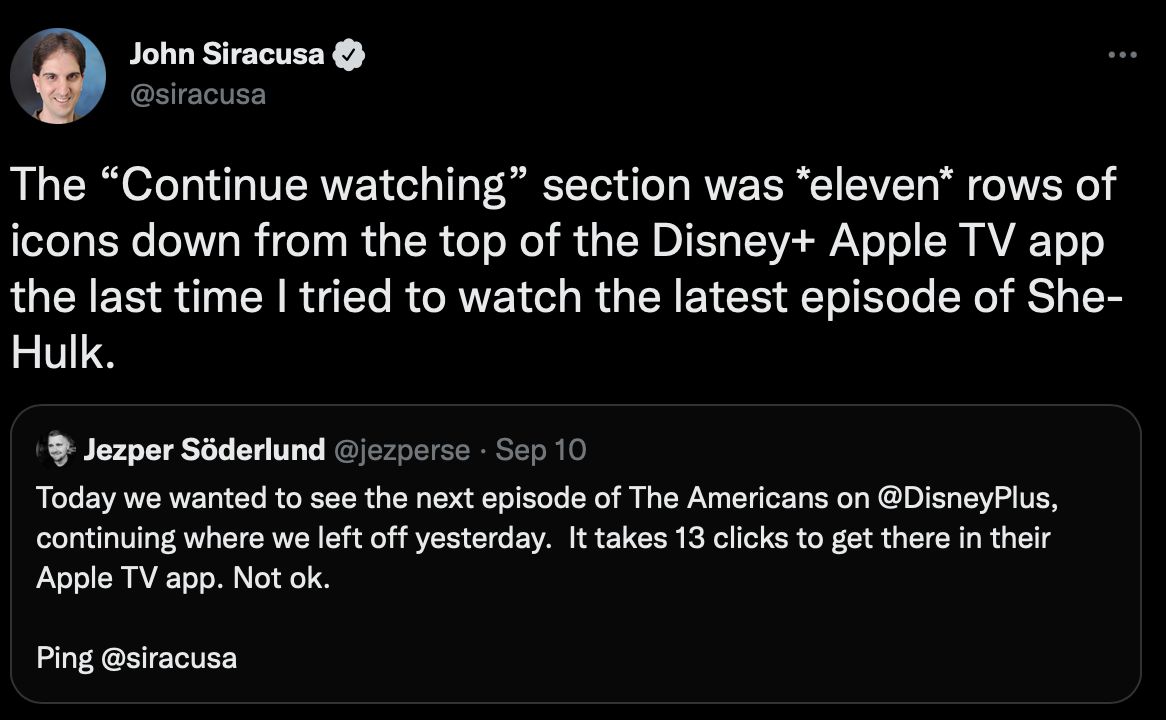

Lately there has been some commotion about how hard it is to reach the “Continue Watching” section of major streaming services (see tweet).

On the surface, it was a good decision, taken after proper A/B testing. By exposing users to different content, you help them discover new things to watch, which might increase their loyalty to your service.

But if you don’t measure the frustration of clicking 13 times, you’ll never know how harmful this new design is.

Harmful?

If I hate your app, I’ll take the next chance I get to switch services or to badmouth you. That is 100x worse than the upside of me discovering a new series when I was looking for that damn last episode.

It’s like leaving a VHS tape halfway through in your recorder on Friday night, only to come back on Saturday night and find that someone rewound it, put it away somewhere you don’t expect, and loaded a new movie you might want to watch. Maddening.

A/B test your model, but don’t forget to optimize for joy!

https://twitter.com/siracusa/status/1568719549935001602

#ml #machinelearning #abtesting

(In case you’re wondering, GoDataDriven | Part of Xebia has an A/B testing training to help you out, developed by the mighty Rogier van der Geer https://godatadriven.academy/training/a-b-testing-and-experiments-training/)