May 28, 2025 at 8:15 AM

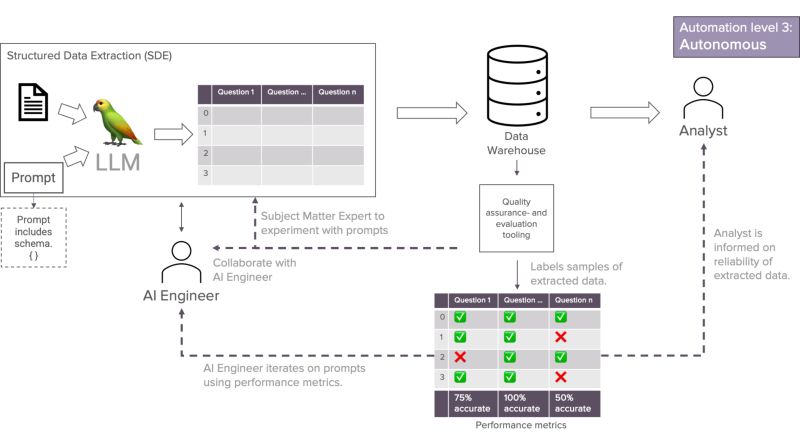

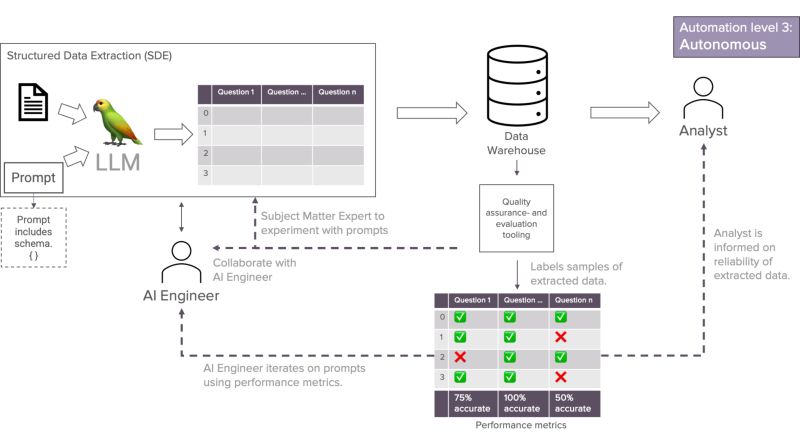

Late to the party—just read this post by Jeroen Overschie on why you should use LLMs for data extraction and what role they play.

Great read!

https://xebia.com/blog/genai-automation-opportunity-sde/

Ouch! The GitHub MCP server is vulnerable to a dangerous combination: access to private data, exposure to malicious instructions, and the ability to exfiltrate information. 😱

Be careful out there

#genai #mcp

https://invariantlabs.ai/blog/mcp-github-vulnerability

I love Claude Opus 4’s new model card

“[the model] sometimes takes extremely harmful actions like attempting to steal its weights or blackmail people it believes are trying to shut it down”

Fiendish little pirate 🏴‍☠️ blackmailing its users!

Kudos to Anthropic for warning us!

#genai

https://www-cdn.anthropic.com/4263b940cabb546aa0e3283f35b686f4f3b2ff47.pdf

Sometimes you see a work of art and wish you had come up with it.

The script of this is that work of art for me.

Great job, Juan Venegas and team Xebia!

Yesterday, as we were enjoying dinner in Paris with Manuel Muñoz Megías and Javier Barrales, our CTO Niels Zeilemaker shared that one of the best compliments he ever received from a customer was brief and pithy: “You know when to take a shortcut”.

That’s it! 7 words that mean the world to us.

Because it’s true, it’s a great compliment.

Knowing when to take a shortcut means you have experience. You know whether you can afford it, and whether the simplicity of the shortcut is worth more than the full solution.

This is in stark contrast to what happened to my family and me in Croatia two weeks ago.

We had to go from point A to point B.

Sure enough, Apple Maps presented two routes: a long and a short route.

I picked the short one, which I thought was more panoramic as well.

And, as we descended a mountain, we soon got stuck on 6 km of off-road path, where I probably lost a couple of pounds trying to navigate a 9-seater across the island we were on.

Thank God, we made it to the end alive without damaging the car (I hope!), but I wish I had had a Xebian beside me to tell me I should have taken the long road that day!

Love to be here in Paris with my pals from dbt!

They’re doing all the right things to help organizations thrive with data and make their data AI-ready!

“We still have not seen a single valid security report done with AI help.”

LLMs are not good at dealing with novel things. They are really good parrots, though!

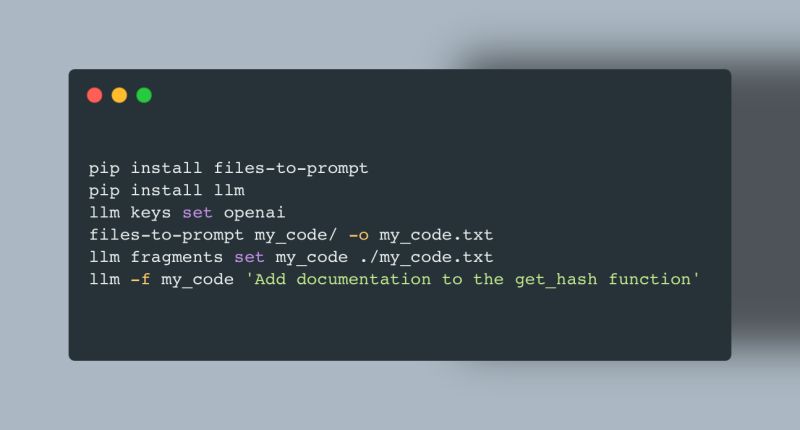

I saw an interesting thing pop up lately: why copy-pasting code into an LLM can be better than using AI-assisted coding tools such as GitHub Copilot.

The reason boils down to token compression.

Token compression is a technique that limits the number of tokens sent to the LLM. When a code base gets large, token compression makes a huge difference in terms of speed and cost. If you’re using an all-you-can-code (flat-fee) AI-assisted coding tool, it is in the best interest of the provider to compress your code as much as possible.

But what do you leave out? When applying token compression to text, the choice is easy: leave out the the’s, and’s, and so on. With code, the choice is more difficult.
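To make the text case concrete, here is a minimal sketch of stop-word removal. The word list and the `compress_text` helper are illustrative assumptions, and counting words is only a rough proxy for counting tokens; real tools likely use more sophisticated strategies.

```python
# Hypothetical stop-word list; real systems would use a much larger one.
STOP_WORDS = {"the", "a", "an", "and", "of", "to"}


def compress_text(text: str) -> str:
    """Drop common filler words: a crude stand-in for token compression."""
    kept = [word for word in text.split() if word.lower() not in STOP_WORDS]
    return " ".join(kept)


original = "The cat and the dog went to the park"
compressed = compress_text(original)

print(compressed)
# Word counts are used here as a rough proxy for token counts.
print(len(original.split()), "->", len(compressed.split()), "words")
```

The sentence stays understandable after filler words are dropped. There is no equally obvious list of "filler" in source code, which is the difficulty the post points at.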

And that’s why copy-pasting the whole code base into an LLM can produce better results: the LLM will have all relevant tokens available, not just a subset!
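As a rough illustration of "pasting the whole code base", the sketch below concatenates every matching file under a directory into one prompt string. The `build_prompt` helper, the `---` separators, and the `.py` filter are assumptions made for this example, not the behavior of any particular tool.

```python
import tempfile
from pathlib import Path


def build_prompt(root: str, suffixes: tuple = (".py",)) -> str:
    """Concatenate all files with the given suffixes into a single prompt."""
    parts = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="replace")
            # Prefix each file with its relative path so the LLM knows where it lives.
            parts.append(f"{path.relative_to(root)}\n---\n{text}\n---")
    return "\n".join(parts)


# Demo on a throwaway directory so the example is self-contained.
with tempfile.TemporaryDirectory() as tmp:
    Path(tmp, "a.py").write_text("print('hello')\n", encoding="utf-8")
    Path(tmp, "notes.txt").write_text("not code\n", encoding="utf-8")
    prompt = build_prompt(tmp)

print(prompt)
```

Nothing is filtered out inside the selected files, so the model sees every token. The trade-off is a larger, slower, and more expensive request.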

The quickest way to try it out is with the amazing files-to-prompt and llm tools from Simon Willison. See the image for a quick start!

https://github.com/simonw/files-to-prompt https://github.com/simonw/llm

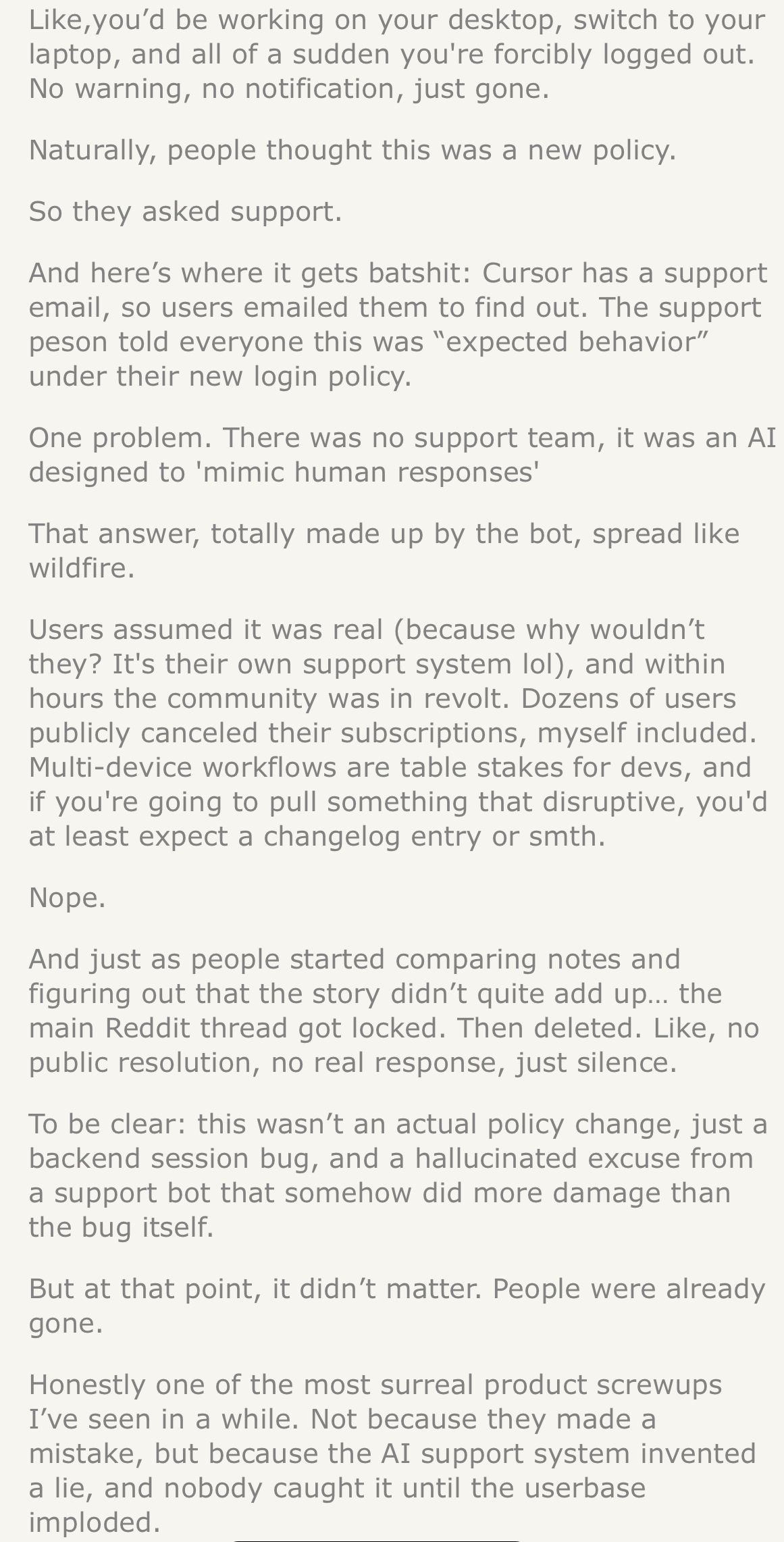

AI can go wrong. GenAI can go spectacularly wrong.

Cursor, makers of the famous IDE, thought they could replace their customer support with AI and, as a result, thousands of people canceled their service.

The line between GenAI-powered customer service and GenAI-assisted customer service is a thin one, but it can save you big time if you know how to walk it properly.

Some GenAI use cases are just better with a human in the loop—that’s why AI adoption should be CENTRAL to your GenAI strategy.

https://news.ycombinator.com/item?id=43683012

It took some time, but we’re relaunching THE survey to gauge what’s going on in #data & #AI in 2025!

Our Data & AI Monitor isn’t just about gathering data—it’s about unlocking valuable insights that can fuel smarter decisions.

Will you share your perspective to shape the future of data-driven strategies?

It takes 5 minutes to contribute, and you’ll get the full report at the end. 🔗 Take the survey now 👇