November 6, 2025 at 11:01 AM

Thrilled that we have been named BeNeLux Top Growth Regional Partner by Databricks!

🎉🎊🍾

Thanks, team Xebia!

Something exciting is happening today in Amsterdam: I’m at the Databricks Data + AI World Tour.

There’s an announcement in the air—more on that this afternoon 🤫

And it was a blast to listen to Teus Kappen explaining how Xebia helped UMC Utrecht achieve their data and AI vision. 🏆

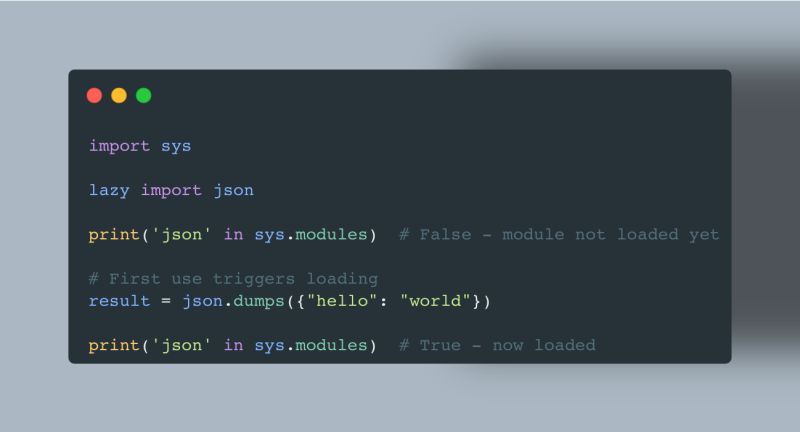

PEP 810 has been approved 🥳

It will be possible to load Python modules lazily, greatly improving startup time and deferring potentially expensive operations until they are actually needed.
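To get a feel for the idea on today's Python, here is a minimal sketch. It does not use the PEP 810 syntax (the PEP proposes an explicit `lazy` keyword on import statements); instead it approximates the behavior with the standard library's existing `importlib.util.LazyLoader`. The helper name `lazy_import` and the choice of `colorsys` as the example module are my own, purely for illustration.

```python
import importlib.util
import sys


def lazy_import(name):
    """Return module `name`, deferring execution of its body until first attribute access.

    Hypothetical helper for illustration; not part of PEP 810.
    """
    if name in sys.modules:
        # Already imported: nothing left to defer.
        return sys.modules[name]
    spec = importlib.util.find_spec(name)
    if spec is None or spec.loader is None:
        raise ModuleNotFoundError(f"No module named {name!r}")
    # Wrap the real loader so the module body runs only when it is first used.
    loader = importlib.util.LazyLoader(spec.loader)
    spec.loader = loader
    module = importlib.util.module_from_spec(spec)
    sys.modules[name] = module
    loader.exec_module(module)  # prepares the lazy module; does not run its code yet
    return module


# Cheap: the body of colorsys has not been executed at this point.
colorsys = lazy_import("colorsys")

# The first attribute access triggers the real import.
hue, sat, val = colorsys.rgb_to_hsv(1.0, 0.0, 0.0)
```

The trade-off is the same one lazy imports bring in general: startup gets cheaper, but any import error surfaces at first use rather than at the top of the file.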

Thanks, Pablo Galindo Salgado and co., for putting this forward!

Some serious FOMO after reading this—must have been an amazing evening.

Ritchie Vink has created something amazing with Polars: a sane API powered by ultra-fast execution. And Frank Mbonu from Xebia knocked it out of the park when he created Lakewatch https://xebia.com/solutions/lakewatch-databricks-lakehouse-optimization/ to help organizations get insights into their Databricks usage so they can control their costs!

If you speak Dutch, this is a great podcast by my colleague Steven Nooijen on data & AI, new revenue streams unlocked, and why self-service is the way to go!

#sharingknowledge Xebia

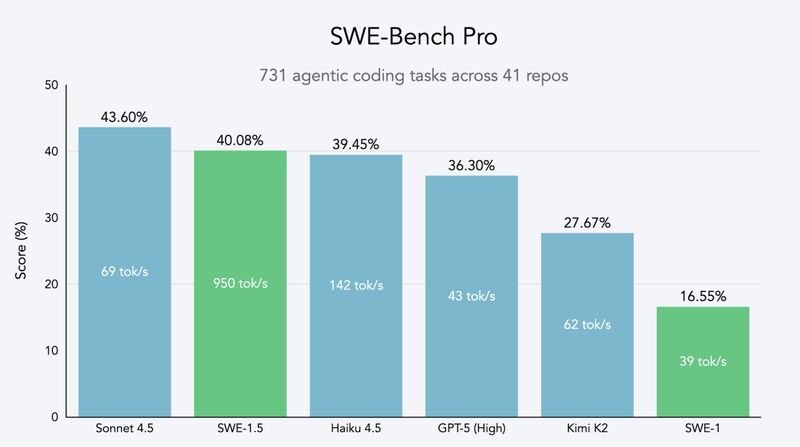

Some big news landed this week around AI-assisted coding!

Cursor launched Composer, a new agent model that achieves frontier coding results while being 4x faster than similar models.

Cognition launched SWE-1.5, another agent model achieving near-SOTA performance while being capable of serving up to 950 tokens/second—that’s really fast! Cognition partnered with Cerebras Systems, the king when it comes to inference speed!

For reference, Sonnet 4.5, which is widely regarded as the best coding model, scores 43.6% on the SWE-Bench Pro benchmark. SWE-1.5 scores 40%, but here’s the kicker: it does so 13x faster!

While it’s true that you want to be right rather than quick, it’s also true that at this speed you can try out many different paths at the same time and select the most appropriate one!

Links in the comments!

Had a lot of fun this morning at GoDataFest.com with Anindita M. and Chozhan D M in a panel about the results of our Data & AI Monitor. We talked about data quality, responsible and ethical AI, sovereign cloud, and more.

Thanks, XiaoHan Li, for hosting us!

Claude skills are a big deal™️

Thanks to skills, you can reduce your multi-agent setup to a single agent with skills, greatly reducing complexity and increasing speed of execution.

Where in the past you might have had a number of agents, each specialized in a task such as data analysis, getting data from a particular set of websites, or making that data available in a dashboard, you can now replace all of them with skills.

And skills are remarkably simple: just a markdown file with instructions, some (Python) scripts, and optional resources.

Can’t wait for all LLM providers to adopt this new pattern!

https://www.anthropic.com/news/skills

That’s your output when you’re an authority 💪🏼

Go Jetze Schuurmans

Oh the irony